The formulas for the likelihood ratios are below. Unlike predictive values, and similar to sensitivity and specificity, likelihood ratios are not impacted by disease prevalence. A negative likelihood ratio or LR-, is “the probability of a patient testing negative who has a disease divided by the probability of a patient testing negative who does not have a disease.”. In other words, an LR+ is the true positivity rate divided by the false positivity rate. A positive likelihood ratio, or LR+, is the “probability that a positive test would be expected in a patient divided by the probability that a positive test would be expected in a patient without a disease.”. LRs allow providers to determine how much the utilization of a particular test will alter the probability. Likelihood ratios (LRs) represent another statistical tool to understand diagnostic tests. Considering all of the diagnostic test outputs, issues with results (e.g., very low specificity) may make clinicians reconsider clinical acceptability, and alternative diagnostic methods or tests should be considered. Providers should consider the sample when reviewing research that presents these values and understand that the values within their population may differ. When a disease is highly prevalent, the test is better at ‘ruling in' the disease and worse at ‘ruling it out.’ Therefore, disease prevalence should also merit consideration when providers examine their diagnostic test metrics or interpret these values from other providers or researchers. Negative Predictive Value=(True Negatives (D))/(True Negatives (D)+False Negatives(C))ĭisease prevalence in a population affects PPV and NPV. Positive Predictive Value=(True Positives (A))/(True Positives (A)+False Positives (B))

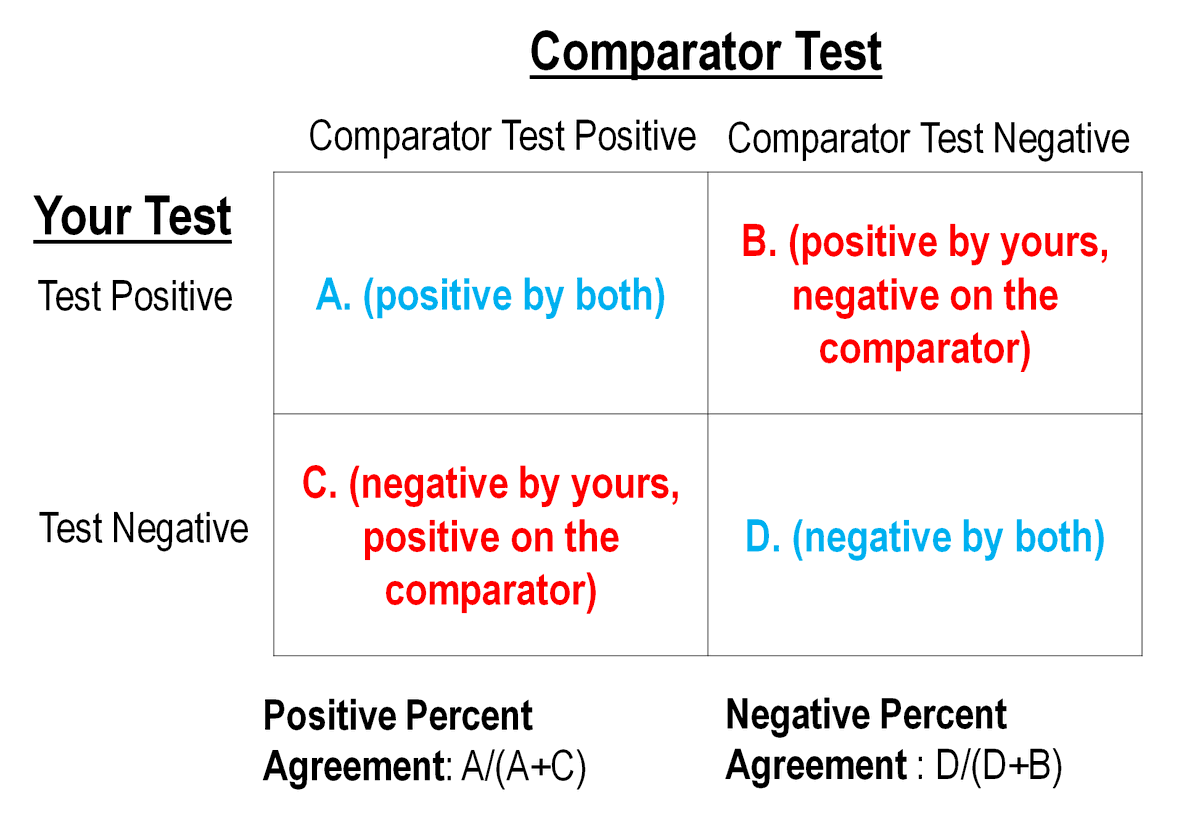

As the value increases toward 100, it approaches a ‘gold standard.’ The formulas for PPV and NPV are below. PPVs determine, out of all of the positive findings, how many are true positives NPVs determine, out of all of the negative findings, how many are true negatives. Next, it is important to understand PPVs and NPVs. Sensitivity and specificity should always merit consideration together to provide a holistic picture of a diagnostic test. Highly sensitive tests will lead to positive findings for patients with a disease, whereas highly specific tests will show patients without a finding having no disease. Sensitivity and specificity are inversely related: as sensitivity increases, specificity tends to decrease, and vice versa. Specificity=(True Negatives (D))/(True Negatives (D)+False Positives (B)) The formula to determine specificity is the following: In other words, it is the ability of the test or instrument to obtain normal range or negative results for a person who does not have a disease. Specificity is the percentage of true negatives out of all subjects who do not have a disease or condition. False positives are a consideration through measurements of specificity and PPV. Sensitivity does not allow providers to understand individuals who tested positive but did not have the disease. Sensitivity=(True Positives (A))/(True Positives (A)+False Negatives (C)) The ability to correctly classify a test is essential, and the equation for sensitivity is the following: In other words, it is the ability of a test or instrument to yield a positive result for a subject that has that disease. Sensitivity is the proportion of true positives tests out of all patients with a condition. (See Diagnostic Testing Accuracy Table 1) A diagnostic test’s validity, or its ability to measure what it is intended to, is determined by sensitivity and specificity. The values within this table can help to determine sensitivity, specificity, predictive values, and likelihood ratios. 
The presentation of diagnostic exam results is often in 2x2 tables, such as Table 1. Providers should utilize diagnostic tests with the proper level of confidence in the results derived from known sensitivity, specificity, positive predictive values (PPV), negative predictive values (NPV), positive likelihood ratios, and negative likelihood ratios. Sensitivity and specificity are essential indicators of test accuracy and allow healthcare providers to determine the appropriateness of the diagnostic tool. Unfortunately, many order tests without considering the evidence to support them. The utilization of diagnostic tests in patient care settings must be guided by evidence.
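
As a minimal sketch, the metrics discussed in this article can be computed directly from the four cells of a 2x2 table. The Python below assumes the cell labels used in the article's formulas (A = true positives, B = false positives, C = false negatives, D = true negatives). The names `TwoByTwo`, `diagnostic_metrics`, and `predictive_values`, as well as the example counts, are hypothetical and chosen only for illustration; they do not come from any study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TwoByTwo:
    """Cells of a 2x2 diagnostic table, labeled as in the article's formulas."""
    a: int  # true positives
    b: int  # false positives
    c: int  # false negatives
    d: int  # true negatives


def diagnostic_metrics(t: TwoByTwo) -> dict:
    """Return the six metrics as fractions (0 to 1), not percentages.

    Assumes every denominator is nonzero (e.g., specificity is neither 0 nor 1
    for the likelihood ratios); a real analysis would need to handle those cases.
    """
    sensitivity = t.a / (t.a + t.c)
    specificity = t.d / (t.d + t.b)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "ppv": t.a / (t.a + t.b),
        "npv": t.d / (t.d + t.c),
        "lr_positive": sensitivity / (1 - specificity),
        "lr_negative": (1 - sensitivity) / specificity,
    }


def predictive_values(sensitivity: float, specificity: float, prevalence: float):
    """Return (PPV, NPV) for a given prevalence, using Bayes' theorem."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    false_neg = (1 - sensitivity) * prevalence
    true_neg = specificity * (1 - prevalence)
    return true_pos / (true_pos + false_pos), true_neg / (true_neg + false_neg)


if __name__ == "__main__":
    # Hypothetical counts for illustration only.
    table = TwoByTwo(a=90, b=30, c=10, d=170)
    for name, value in diagnostic_metrics(table).items():
        print(f"{name}: {value:.3f}")

    # Same test characteristics, two different prevalences.
    for prevalence in (0.01, 0.50):
        ppv, npv = predictive_values(0.90, 0.85, prevalence)
        print(f"prevalence={prevalence:.2f}: PPV={ppv:.3f}, NPV={npv:.3f}")
```

Holding sensitivity and specificity fixed while varying prevalence in `predictive_values` illustrates the point about prevalence: PPV rises and NPV falls as the disease becomes more common, while the likelihood ratios, which depend only on sensitivity and specificity, stay the same.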
